#SBATCH -cpus-per-task=1 # cpu-cores per task (>1 if multi-threaded tasks)

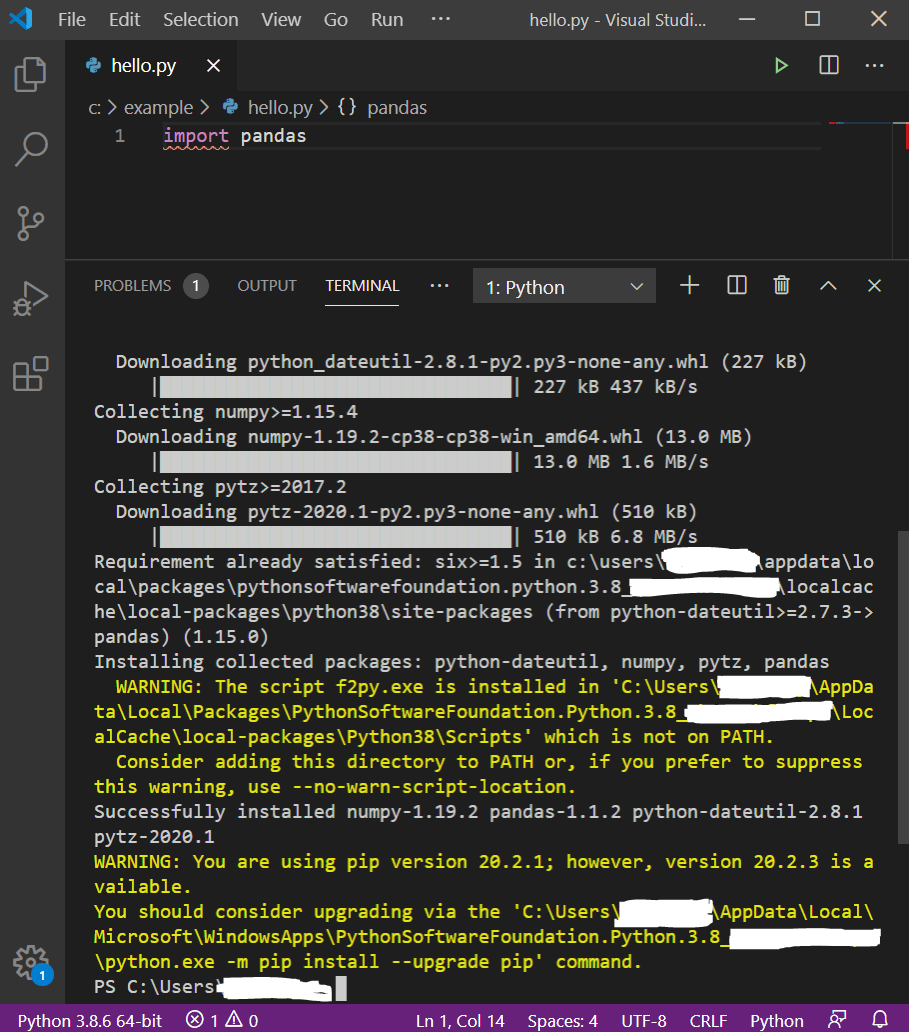

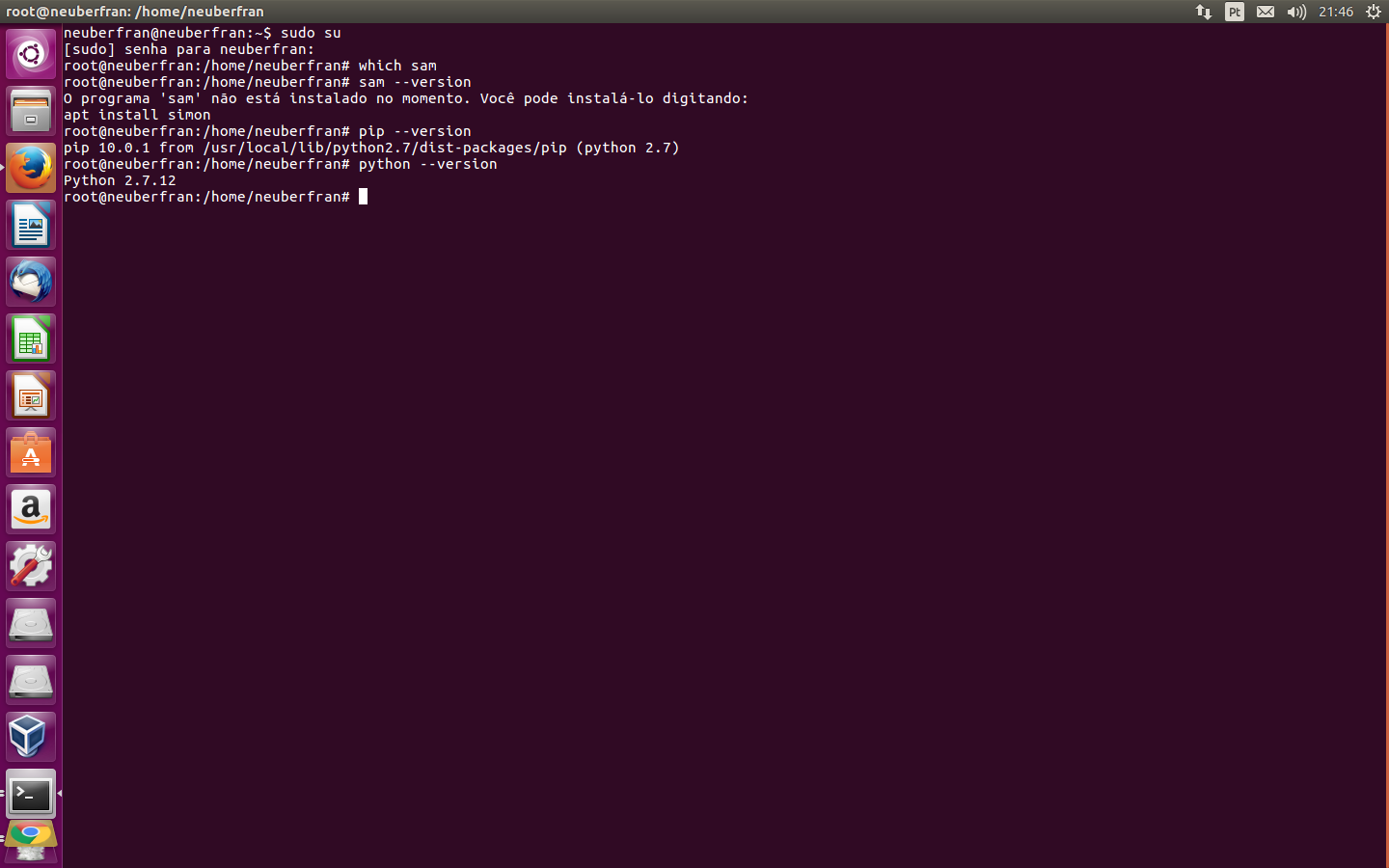

#SBATCH -ntasks=1 # total number of tasks across all nodes #SBATCH -job-name=py-job # create a short name for your job Below is a sample Slurm script (job.slurm): On the command line, use conda deactivate to leave the active environment and return to the base environment. Consider replacing myenv with an environment name that is more specific to your work. $ conda create -name ml-env scikit-learn pandas matplotlib -channel conda-forgeĮach package and its dependencies will be installed locally in ~/.conda. If you don't want to spend the time to read this entire page (not recommended) then try the following procedure to install your package(s) (below we assume Python 3): Commands preceded by the $ character are to be run on the command line. Angular brackets denote command line options that you should replace with a value specific to your work.

This guide presents an overview of installing Python packages and running Python scripts on the HPC clusters. Packaging and Distributing Your Own Python Package.Isolated Python Environments with virtualenv.Office of Information Technology Senior Management.Scientific Computing Administrators Meeting.Operations Research and Financial Engineering.Center for Statistics & Machine Learning.
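A job script like the one above typically ends by running a Python script. Before submitting real work, it can help to run a short check that the interpreter belongs to the conda environment and that the installed packages import. The following is a minimal sketch, not part of the original guide: the helper name `missing_packages` is hypothetical, and the package list assumes the scikit-learn, pandas, and matplotlib environment described here (scikit-learn is imported as `sklearn`).

```python
import importlib.util
import sys


def missing_packages(names):
    """Return the top-level module names in `names` that cannot be found."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    # Import names for the packages in the example environment (assumed).
    wanted = ["sklearn", "pandas", "matplotlib"]

    # With the environment active, this prefix should point inside ~/.conda.
    print("interpreter prefix:", sys.prefix)

    missing = missing_packages(wanted)
    if missing:
        print("not importable:", ", ".join(missing))
    else:
        print("all packages importable")
```

If any names are reported as not importable, the environment was probably not activated in the shell or job that ran the check.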
